Global Warming Prediction Project

About the Prediction Models

14.01.2010

Decision-making, whatever the field of human endeavour, requires a clear formulation and a good understanding of the problem. Predicting what may happen to a system under certain circumstances is often very difficult even for the simplest of systems, especially if they are not man-made. Humans have for centuries been seeking proxies for real processes. A substitute that can generate reliable information about a real system and its behaviour is called a model, and models form the basis for any decision. It is worth building models to aid decision-making, because models make it possible to:

‣ identify the relationships between cause and effect (the subject of identification). Deriving an analytical relationship between them leads to a deeper understanding of the problem at hand,

‣ predict what the respective objects can expect over a finite future time span (the subject of prediction). It is exactly this ability to make predictions about the future that forms the core of intelligence. Our brain uses a memory-prediction model to make continuous predictions of future events in parallel across all our senses,

‣ simulate the objects’ behaviour by experimenting with models, and thus answer “what-if” questions (the subject of simulation) essential to decision-making.

The world around us is getting more complex, more interdependent, more connected and more global. We can observe it, but we cannot understand it because of its complexity and the myriad interactions that are impossible to know, let alone foresee. Uncertainty and vagueness, coupled with rapid developments, radically affect humanity. Though we observe these effects, we most often do not understand the consequences of our socio-economic and ecological actions, the dynamics involved, or the interdependencies of real-world systems, in which system variables are dynamically related to many others and where it is usually difficult to distinguish causes from effects.

We are facing these complex problems, which do require decision-making, but the means (the models) for understanding, predicting, simulating and, where possible, controlling such systems are simply missing. This is more and more the situation with many real-world problems. To fill this essential gap, new and appropriate inductive self-organising modelling methods have been developed, in theory and in practice, as powerful tools for revealing the missing implicit relationships within complex systems.

KnowledgeMiner has been active in the field of self-organizing knowledge extraction from data for many years. In this project, we use parallel implementations of our proven predictive modelling technologies to model one part of the earth's climate system, the distribution and development of temperature over land and sea, exclusively from public, observed, historical data. These technologies reliably self-organize models from high-dimensional, noisy data sets. This is essential for climate modeling because it allows modeling and prediction to take into account system states that lie far back in time, that is, very long time lags (up to 840 months = 70 years) that represent the memory of the system. This easily leads to 10,000+ input variables that need to be processed and that should still result in valid models, i.e., models that do not merely reflect chance correlations.
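To illustrate how long time lags inflate the number of input variables, here is a minimal sketch in Python. It is not KnowledgeMiner's implementation: the function `build_lagged_rows`, the toy series and the figure of 12 input series are assumptions made purely for illustration. Each observed series contributes one candidate input per lag, so the input count is simply the number of series multiplied by the maximum lag.

```python
def build_lagged_rows(series, max_lag):
    """Turn monthly series into rows of lagged candidate inputs.

    series:  dict mapping a series name to a list of monthly values
             (all lists must have the same length).
    max_lag: largest time lag, in months, to include.

    Returns (names, rows). For each month t >= max_lag, the row holds
    x[t-1], ..., x[t-max_lag] for every series, in the order of `names`.
    """
    lengths = {len(values) for values in series.values()}
    if len(lengths) != 1:
        raise ValueError("all series must have the same length")
    n_months = lengths.pop()

    lags = range(1, max_lag + 1)
    names = [f"{name}(t-{lag})" for name in series for lag in lags]
    rows = [
        [series[name][t - lag] for name in series for lag in lags]
        for t in range(max_lag, n_months)
    ]
    return names, rows


# Hypothetical toy data: two short series, lags of up to 3 months.
toy = {
    "land": [0.1, 0.2, 0.3, 0.4, 0.5],
    "sea": [1.0, 1.1, 1.2, 1.3, 1.4],
}
names, rows = build_lagged_rows(toy, max_lag=3)
print(len(names), "candidate inputs,", len(rows), "usable months")

# With lags of up to 840 months, an assumed 12 input series
# already give 12 * 840 candidate inputs.
n_inputs_at_full_lag = 12 * 840
print(n_inputs_at_full_lag)
```

The sketch also shows the cost of long memory: every row needs `max_lag` months of history, so the longer the lag, the fewer months remain for fitting a model to a growing number of candidate inputs. This is why guarding against chance correlations matters.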

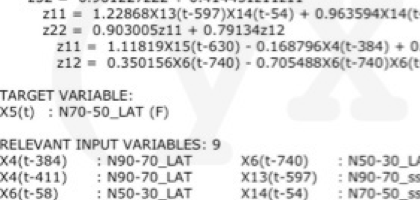

Two types of models are applied in this project to calculate monthly predictions: non-linear, dynamic regression models and non-parametric models based on the concept of similar patterns. Regression models are described explicitly by a set of regression equations self-organized from data (image above), while non-parametric models are described more fuzzily by a set of patterns that are similar to past periods in time. More about the modeling technologies can be found here.
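The idea behind prediction by similar patterns can be sketched as follows. This is a simplified illustration under stated assumptions, not the algorithm used in the project: the function `similar_pattern_forecast`, the Euclidean distance measure, the plain averaging of continuations and the toy data are all hypothetical choices. The most recent stretch of the series serves as a reference pattern, the most similar past stretches are located, and what followed them is averaged into a forecast.

```python
import math


def similar_pattern_forecast(history, pattern_len, horizon, n_analogs=3):
    """Forecast `horizon` steps by averaging what followed similar past patterns.

    history:     list of past values, oldest first.
    pattern_len: length of the reference pattern (the most recent values).
    horizon:     number of future steps to predict.
    n_analogs:   number of most similar past patterns to average over.
    """
    last_start = len(history) - pattern_len - horizon
    if last_start < 0:
        raise ValueError("history is too short for this pattern length and horizon")

    reference = history[-pattern_len:]

    # Score every past window that still has `horizon` known values after it.
    candidates = []
    for start in range(last_start + 1):
        window = history[start:start + pattern_len]
        candidates.append((math.dist(window, reference), start))

    # Keep the closest windows (ties are broken by the earlier start).
    best = sorted(candidates)[:n_analogs]

    # Average the continuations of the selected windows, step by step.
    forecast = []
    for step in range(horizon):
        values = [history[start + pattern_len + step] for _, start in best]
        forecast.append(sum(values) / len(values))
    return forecast


# Hypothetical toy series with a strict period of four steps.
toy_history = [0, 1, 2, 3] * 5
forecast = similar_pattern_forecast(toy_history, pattern_len=4, horizon=2, n_analogs=2)
print(forecast)
```

Unlike a regression model, this approach yields no equation. The "model" is the set of selected past periods itself, which is why its description is fuzzier.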

The objective of this project is the monthly modeling and prediction of global temperature anomalies through self-organizing knowledge extraction from public data. The project is impartial and has no hidden personal, financial, political or other interests. It is entirely independent, transparent, and open in its results.